Tactile Modality Fusion for Vision-Language-Action Models

Preprint 2026

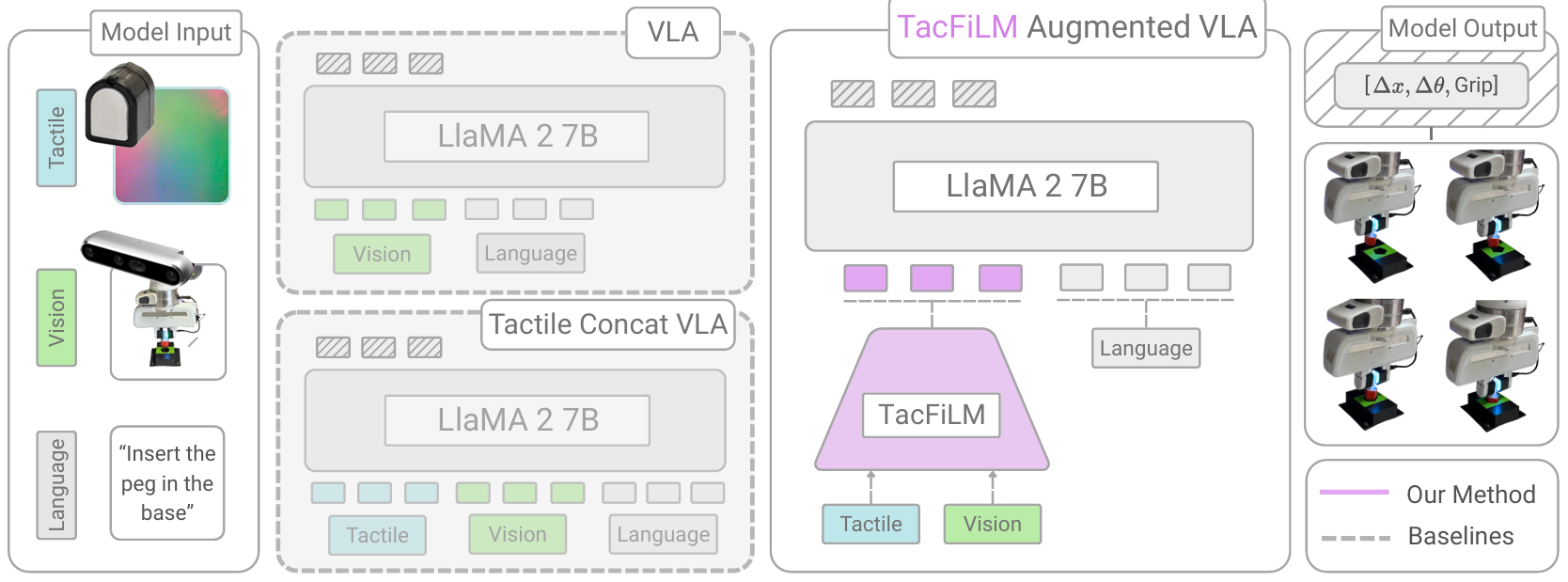

We introduce TacFiLM, a lightweight method for incorporating tactile feedback into vision-language-action (VLA) models. TacFiLM conditions visual features on tactile representations via feature-wise linear modulation (FiLM), letting robots feel what they cannot see: contact forces, friction, and compliance. This improves performance on contact-rich manipulation tasks.
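
To make the conditioning step concrete, the following is a minimal sketch of generic FiLM, in which a conditioning vector produces a per-channel scale and shift that are applied to another feature map. It is not the TacFiLM implementation: the function name `film`, the single linear projections, the feature dimensions, and the identity initialization are all assumptions chosen for illustration.

```python
import numpy as np


def film(visual, tactile, w_gamma, b_gamma, w_beta, b_beta):
    """Apply feature-wise linear modulation to visual features.

    visual:  (num_tokens, d_v) visual feature tokens
    tactile: (d_t,) tactile embedding used as the conditioning signal
    w_*:     (d_t, d_v) projection weights (hypothetical, for illustration)
    b_*:     (d_v,) projection biases
    """
    gamma = tactile @ w_gamma + b_gamma  # per-channel scale, shape (d_v,)
    beta = tactile @ w_beta + b_beta     # per-channel shift, shape (d_v,)
    # Broadcast the same scale and shift across every visual token.
    return gamma * visual + beta


# Illustrative sizes only; the source does not state the real dimensions.
rng = np.random.default_rng(0)
num_tokens, d_v, d_t = 4, 8, 3
visual = rng.normal(size=(num_tokens, d_v))
tactile = rng.normal(size=d_t)

# Assumed identity initialization: gamma = 1 and beta = 0, so the
# modulated features start out equal to the unmodified visual features.
w_gamma = np.zeros((d_t, d_v))
b_gamma = np.ones(d_v)
w_beta = np.zeros((d_t, d_v))
b_beta = np.zeros(d_v)
identity_out = film(visual, tactile, w_gamma, b_gamma, w_beta, b_beta)

# With non-zero projections, the tactile signal rescales and shifts
# each visual channel.
w_gamma_rand = 0.1 * rng.normal(size=(d_t, d_v))
w_beta_rand = 0.1 * rng.normal(size=(d_t, d_v))
modulated_out = film(visual, tactile, w_gamma_rand, b_gamma, w_beta_rand, b_beta)
```

Under the assumed identity initialization, the layer leaves the visual features unchanged until the projections are trained, which is one common way to add a conditioning pathway without disturbing existing features at the start of training.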